In the 1980s, microprocessor speeds improved far faster than memory access times. This widening gap called for a way to make memory access more efficient across the system, which paved the way for the CPU cache.

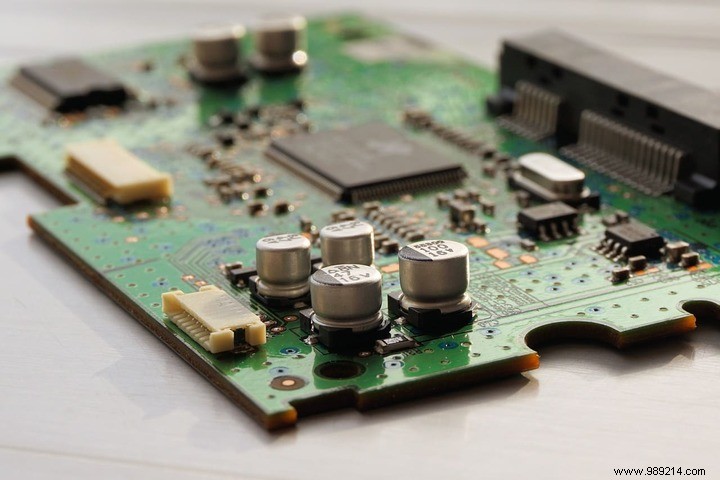

The CPU cache is a pivotal innovation in computing history. It is a small, high-speed memory built into the processor that narrows the speed gap between the CPU and main memory (RAM) by keeping the data the CPU needs close at hand.

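Caches are organized in levels. The smallest and fastest is the L1 cache, typically a few tens of kilobytes per core. It is split into an instruction cache, which holds the instructions the CPU executes, and a data cache, which holds the operands those instructions work on.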

Next comes the L2 cache: larger, typically 256 KB to 8 MB, but slightly slower. It holds data that does not fit in L1, including data prefetched because the CPU is likely to need it soon.

The L3 cache is the largest and slowest of the three on-chip levels, typically ranging from 4 MB to 50 MB. In modern multicore processors it is usually shared by all cores, while L1 and often L2 are private to each core.

As a program runs, data is copied from RAM into the cache hierarchy on demand, in fixed-size blocks called cache lines (commonly 64 bytes). When the CPU needs a piece of data, it checks L1 first, then L2, then L3, and only then RAM. A cache hit means the data is found at that level and returned quickly; a miss sends the request on to the next, slower level and ultimately to RAM.
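The lookup order above can be sketched as a small Python simulation. It is a toy model under stated assumptions, not a description of real hardware: 64-byte lines, fully associative levels with least-recently-used (LRU) replacement, and made-up capacities and latencies. `CacheLevel` and `access` are hypothetical names chosen for this illustration.

```python
from __future__ import annotations

from collections import OrderedDict

LINE_SIZE = 64  # bytes per cache line (assumed; a common size)


class CacheLevel:
    """One cache level, modeled as fully associative with LRU replacement.
    This is a simplification: real caches are usually set-associative."""

    def __init__(self, name: str, num_lines: int, latency: int) -> None:
        self.name = name
        self.num_lines = num_lines  # capacity in lines (hypothetical)
        self.latency = latency      # cycles to check this level (hypothetical)
        self.lines: OrderedDict[int, None] = OrderedDict()

    def lookup(self, line: int) -> bool:
        """Return True on a hit and mark the line as most recently used."""
        if line in self.lines:
            self.lines.move_to_end(line)
            return True
        return False

    def fill(self, line: int) -> None:
        """Insert a line, evicting the least recently used one if full."""
        self.lines[line] = None
        self.lines.move_to_end(line)
        if len(self.lines) > self.num_lines:
            self.lines.popitem(last=False)


def access(levels: list[CacheLevel], address: int, ram_latency: int) -> tuple[str, int]:
    """Search L1 outward; return (where the data was found, total cycles spent)."""
    line = address // LINE_SIZE
    cycles = 0
    for i, level in enumerate(levels):
        cycles += level.latency
        if level.lookup(line):
            # Copy the line into the faster levels that missed.
            for upper in levels[:i]:
                upper.fill(line)
            return level.name, cycles
    # Missed everywhere: pay the RAM latency and fill every level.
    cycles += ram_latency
    for level in levels:
        level.fill(line)
    return "RAM", cycles


if __name__ == "__main__":
    hierarchy = [
        CacheLevel("L1", num_lines=4, latency=4),
        CacheLevel("L2", num_lines=16, latency=12),
        CacheLevel("L3", num_lines=64, latency=40),
    ]
    print(access(hierarchy, 0x1000, ram_latency=200))  # ('RAM', 256): misses every level
    print(access(hierarchy, 0x1008, ram_latency=200))  # ('L1', 4): same 64-byte line
```

The second access hits in L1 because it falls in the same 64-byte line as the first. On a miss, this model fills every level on the way back, roughly like an inclusive hierarchy; real designs vary, and some L3 caches are non-inclusive or act as victim caches.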

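Latency, the delay between requesting data and receiving it, is key to performance. L1 has the lowest latency, typically just a few CPU cycles. Each miss adds the latency of the next, slower level, so a request that misses in every cache pays the full cost of a trip to RAM.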
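One common way to quantify how misses add up is the average memory access time (AMAT). For a three-level hierarchy, AMAT = t1 + m1 × (t2 + m2 × (t3 + m3 × tRAM)), where each t is a level's hit latency and each m is its local miss rate (the fraction of requests reaching that level that miss). The sketch below evaluates this formula in Python; `average_access_time` is a hypothetical name, and the latencies and miss rates are illustrative, not measurements of any real processor.

```python
from __future__ import annotations


def average_access_time(levels: list[tuple[float, float]], ram_latency: float) -> float:
    """Average memory access time (AMAT) for a cache hierarchy.

    levels: (hit_latency, local_miss_rate) pairs ordered from L1 outward.
    ram_latency: cost of going all the way to main memory.
    """
    cost = ram_latency
    # Work from the outermost level inward: each level costs its own latency
    # plus its miss rate times the cost of the levels beyond it.
    for latency, miss_rate in reversed(levels):
        cost = latency + miss_rate * cost
    return cost


if __name__ == "__main__":
    # Hypothetical cycle counts and local miss rates for L1, L2, L3.
    hierarchy = [(4, 0.10), (12, 0.40), (40, 0.50)]
    print(f"{average_access_time(hierarchy, ram_latency=200):.1f} cycles")  # 10.8 cycles
    print(f"{average_access_time([], ram_latency=200):.1f} cycles")  # 200.0 cycles (no cache)
```

With these numbers, only 2% of requests (0.10 × 0.40 × 0.50) reach RAM, and the average cost drops from 200 cycles to about 10.8. Every access pays t1, while each deeper latency is scaled by the product of the miss rates in front of it, which is why a fast L1 with a high hit rate matters so much.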

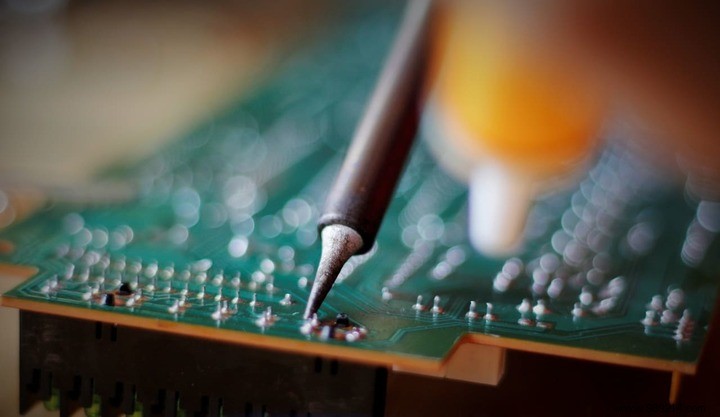

Shrinking transistor sizes let designers fit more cache on the die and place it closer to the cores, which shortens physical distances and helps keep latencies low as capacity grows.

Cache specifications are rarely spotlighted in marketing, but they matter. When choosing a processor, look for ample, low-latency cache to help programs run faster and more smoothly.