The race to shrink computer chips has intensified ever since the first silicon transistors. Hardware makers have relentlessly packed more transistors into ever-smaller spaces: in 2014, Intel unveiled processors whose transistors were roughly 6,000 times smaller than the diameter of a human hair. Molecular-scale devices, however, have remained elusive. On June 17, 2016, researchers at Peking University in Beijing reported a working single-molecule switch, bringing that goal a step closer. As the push for smaller hardware accelerates, let's examine what this breakthrough means and the formidable obstacles that remain.

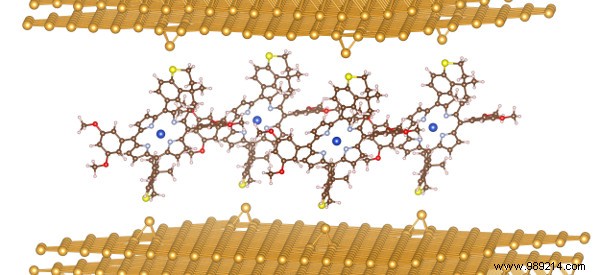

Shrinking transistors is the most direct way to increase density, but it isn't the only path forward. The Peking University team developed a molecular switch that stayed stable for about a year, far longer than earlier versions, which lasted only hours. Just as notable, the switch is toggled by light rather than by an electrical signal. In principle, light-based control could allow denser packing and speed gains that go beyond what Gordon Moore originally envisioned.

At the atomic and molecular scales, instability reigns. Stray electromagnetic fields can subtly alter the atomic structure of metals and conductors, producing false signals, and microscopic grains in the material can cause a device to fail. The Beijing team's switch survives more than 100 on/off cycles and lasts about a year. That is impressive, but still far short of a device built to last a decade. Isolating these devices from their surrounding environment will be essential for long-term stability.

Even with a robust switch, integrating it into manufacturing poses massive hurdles. Today's hardware is built on conventional integrated circuits, and molecular switches cannot easily be incorporated into them. Moreover, reading data from individual molecules requires specialized, energy-intensive equipment.

Molecule-sized switches hold tantalizing, revolutionary potential, provided researchers can overcome challenges such as the need for cryogenic data reading, the difficulty of connecting molecular components to conventional electronic circuits, and short device lifespans. If they succeed, silicon chips could eventually become obsolete.

When do you think we'll overcome these barriers? Share your thoughts in the comments!