As someone who's worked with computing fundamentals for years, I've seen how number systems underpin everything from basic calculations to complex programming. While we humans count in decimal, using the ten digits 0 through 9, computers rely on binary. Let's break down the differences clearly.

From childhood, we learn to count on our fingers: ten fingers for our base-10 decimal system. Once a position passes 9, we carry over into the next position, so 9 + 1 becomes 10. This familiar system is now the standard for everyday human communication in nearly every culture.

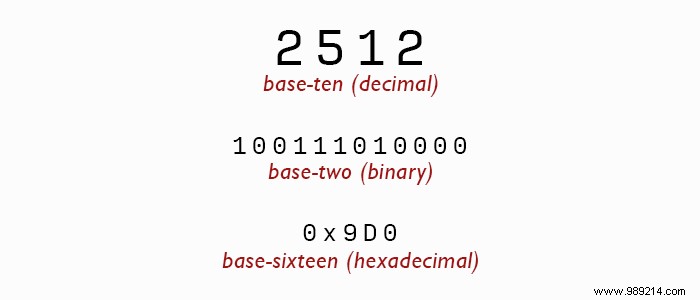

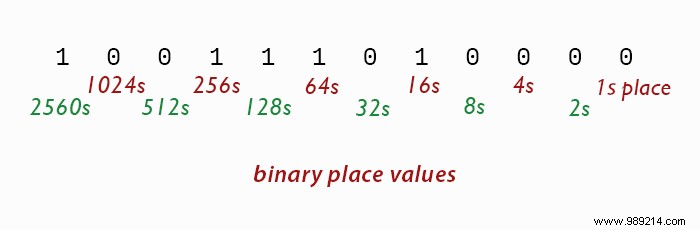

Computers, however, use binary (base-2) because hardware is simpler with just two states: on (1) or off (0). Each position represents a power of 2, reading from right to left: 1, 2, 4, 8, 16, and so on. To convert binary to decimal, multiply each digit by its positional value and sum the results. For example, 1011 is 8 + 0 + 2 + 1 = 11. It's the same positional principle as decimal, just with a base of 2 instead of 10.

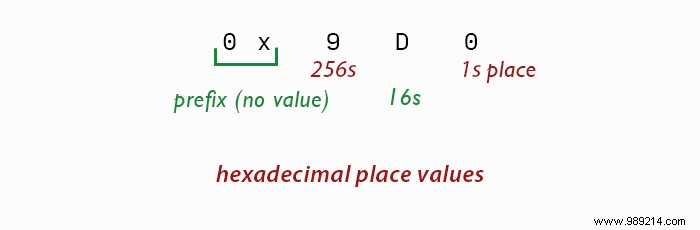
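To make the conversion rule concrete, here is a minimal Python sketch of it. The function name `binary_to_decimal` is my own choice for illustration, and the loop spells out the arithmetic on purpose; in real code you would normally just call the built-in `int(bits, 2)`.

```python
def binary_to_decimal(bits: str) -> int:
    """Convert a string of 0s and 1s to its decimal value."""
    total = 0
    # Walk the digits right to left so position 0 is the 1s place,
    # position 1 is the 2s place, position 2 is the 4s place, and so on.
    for position, digit in enumerate(reversed(bits)):
        if digit not in ("0", "1"):
            raise ValueError(f"not a binary digit: {digit!r}")
        total += int(digit) * (2 ** position)
    return total


print(binary_to_decimal("1011"))  # 8 + 0 + 2 + 1 = 11
```

The result matches Python's built-in parser, so `binary_to_decimal("1011") == int("1011", 2)` holds.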

Hexadecimal (base-16) expands this with 16 symbols: 0-9 and A-F for 10-15. You'll spot it in web color codes (#FF0000 for red) or prefixed with 0x in code. Each position is worth 16 times the one to its right, which makes it compact: one hex digit equals four binary bits, and two make a byte.
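As a rough illustration of the color-code case, the sketch below splits a six-digit code into its three bytes. The helper name `parse_hex_color` is hypothetical, and it assumes the common `#RRGGBB` form only (no shorthand like `#F00` and no alpha channel).

```python
def parse_hex_color(code: str) -> tuple:
    """Split a '#RRGGBB' color code into (red, green, blue) values 0-255."""
    digits = code.lstrip("#")
    if len(digits) != 6:
        raise ValueError("expected six hex digits, e.g. '#FF0000'")
    # Two hex digits make one byte, so each channel is a two-character slice.
    red, green, blue = (int(digits[i:i + 2], 16) for i in (0, 2, 4))
    return red, green, blue


print(parse_hex_color("#FF0000"))  # (255, 0, 0): full red, no green, no blue
print(0xFF == 255)                 # True: the 0x prefix marks a hex literal
```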

One system for all would be ideal, but each serves a purpose. Decimal suits human intuition. Binary matches electronic states perfectly. Hexadecimal bridges the gap, letting developers read binary data efficiently without endless 1s and 0s—essential for memory addresses and registers.
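To see why hex is easier on the eyes than raw bits, this small sketch prints one byte in all three systems. The function `describe_byte` is an illustrative name of my own, and it relies only on Python's built-in `format`.

```python
def describe_byte(value: int) -> str:
    """Show one byte (0-255) in decimal, grouped binary, and hex."""
    if not 0 <= value <= 255:
        raise ValueError("value must fit in one byte (0-255)")
    bits = format(value, "08b")          # eight binary digits, zero-padded
    nibbles = f"{bits[:4]} {bits[4:]}"   # split into two groups of four bits
    hex_digits = format(value, "02X")    # each group of four maps to one hex digit
    return f"{value:>3} = {nibbles} = 0x{hex_digits}"


print(describe_byte(202))  # 202 = 1100 1010 = 0xCA
```

Each four-bit group lines up with exactly one hex digit (1100 is C, 1010 is A), which is what makes long binary values readable at a glance.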