Google has stepped up its search innovations since its 2015 reorganization under Alphabet. On September 22, 2016, it open-sourced Show and Tell, a TensorFlow model that detects objects in images and generates captions automatically. The system lacks human creativity, but its Inception V3 image encoder still marks a breakthrough in AI vision, one that extends beyond basic recognition toward more advanced intelligence.

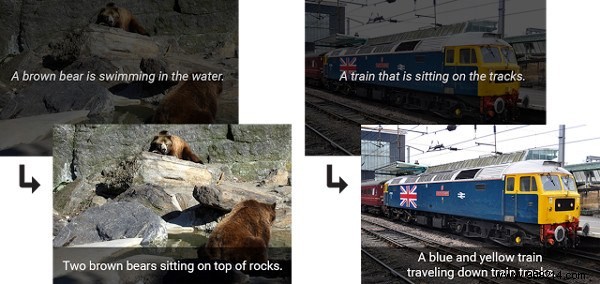

A picture may be worth a thousand words, but the captioning system settles for a concise, factual description that shows a solid grasp of what the image contains.

This foundational visual understanding moves machines closer to processing stimuli the way simple aquatic animals do. That would be a major upgrade over today's bots, which struggle with basic environmental awareness outside controlled settings.

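Inception V3 reaches roughly 93.9% top-5 accuracy on the ImageNet image classification task, which clears a key computer vision hurdle. Note that this figure measures how well the encoder recognizes objects, not how good the captions are. The captioning system still depends on training data captioned by humans, and it falls short on abstract perception.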

Can it sense anger in a face, detect a fight, or understand why someone cries? No. Human vision extrapolates context and triggers emotional responses, such as finding flowers beautiful or fries irresistible, and those responses elude machines unless they are explicitly programmed. True emotional intelligence may remain uniquely human.

Will AI ever marvel at a rose petal under a microscope? Share your thoughts in the comments!